
tl;dr – Timekpr-Next Remote is an easy-to-use web app for adding or removing time for users of the Linux login time tracking app, Timekpr-nExT

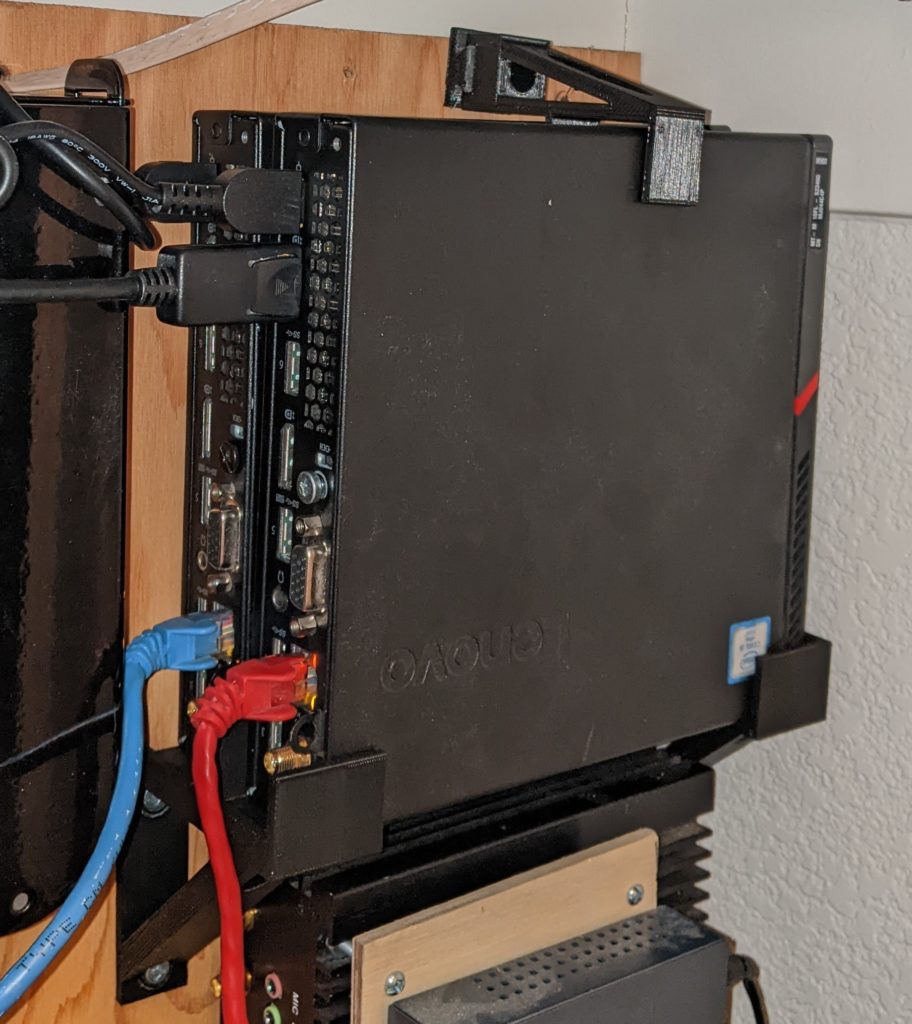

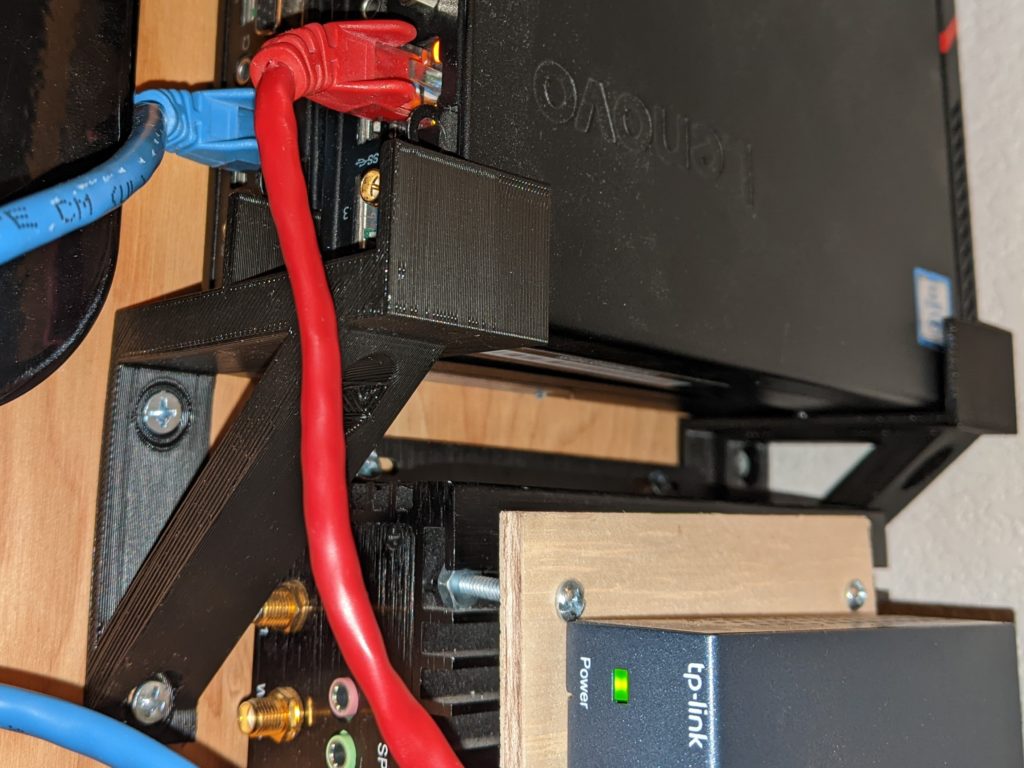

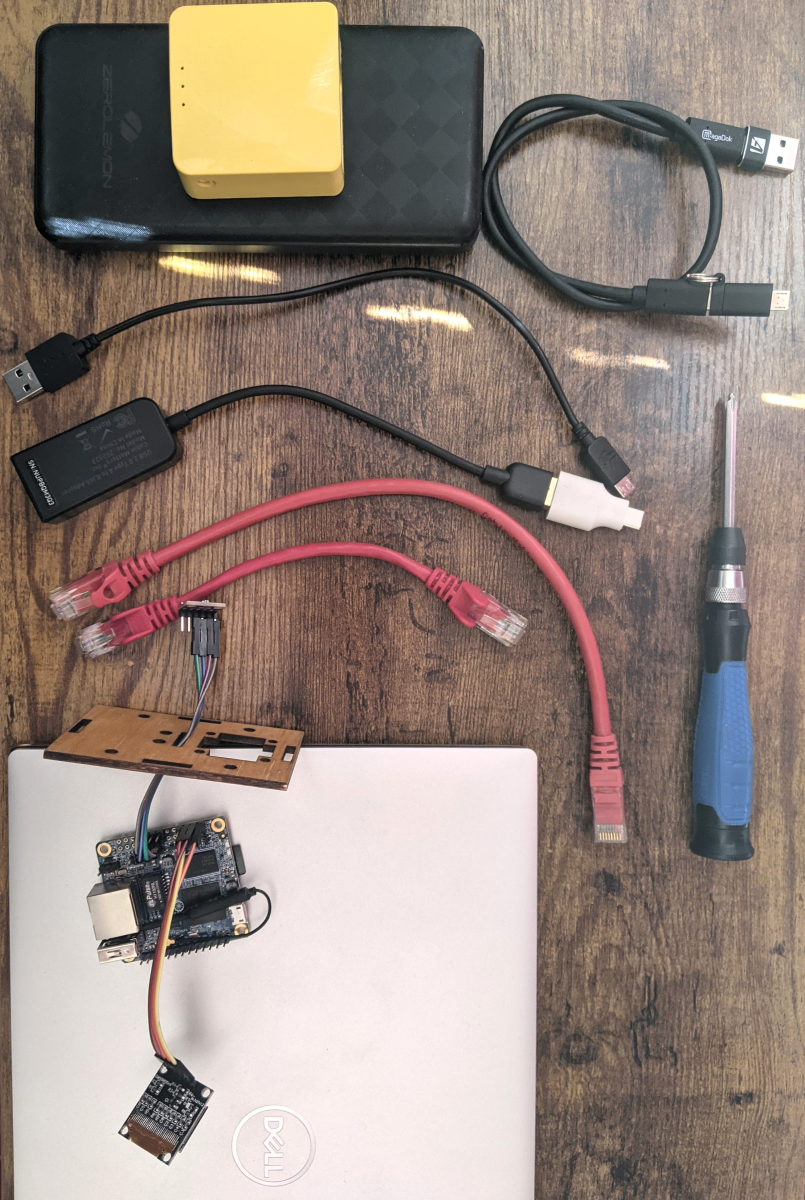

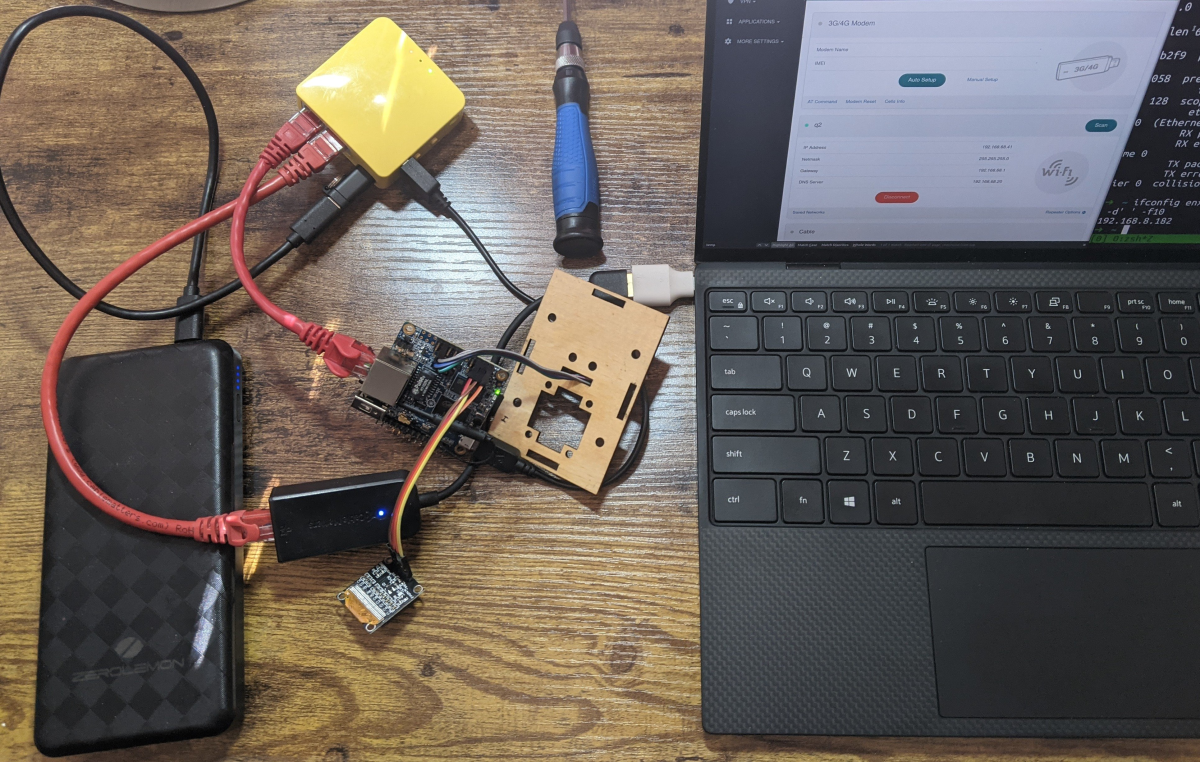

Recently, one of my kids got a drawing tablet and wanted to use it with Krita. Given they were on a Chromebook, we decided to repurpose an old Intel NUC i3 server with a clean install of Ubuntu 22.04 Desktop. Not only would this allow Krita to run well, but it would also enable video editing via Kdenlive and other software that is hard to run on ChromeOS.

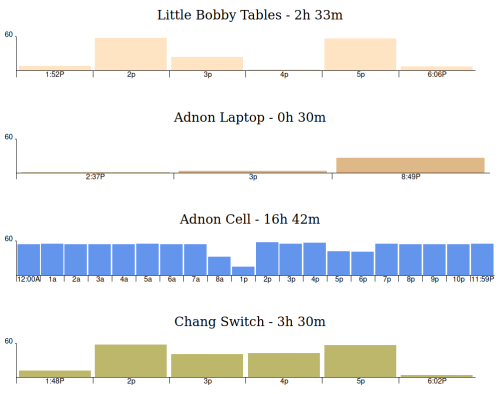

For some time now, we’ve been happily running Legba to track computer usage. However, we wanted something with a bit more teeth, so we settled on Timekpr-nExT (henceforth just “Timekpr”, but it’s the “nExT” one, not this out of date one or this waaay out of date one, k?). This is a great app that allows for a finite amount of time to be used per day, and it is relatively easy to add more time. Well, easy if you’re on a desktop. And you have SSH installed. And you know the login and password to each computer you want to control. So, not at all easy if you’re a busy parent who’s juggling managing kids and helping with school work and cooking dinner. So, not at all easy if you’re a parent, amiright?!

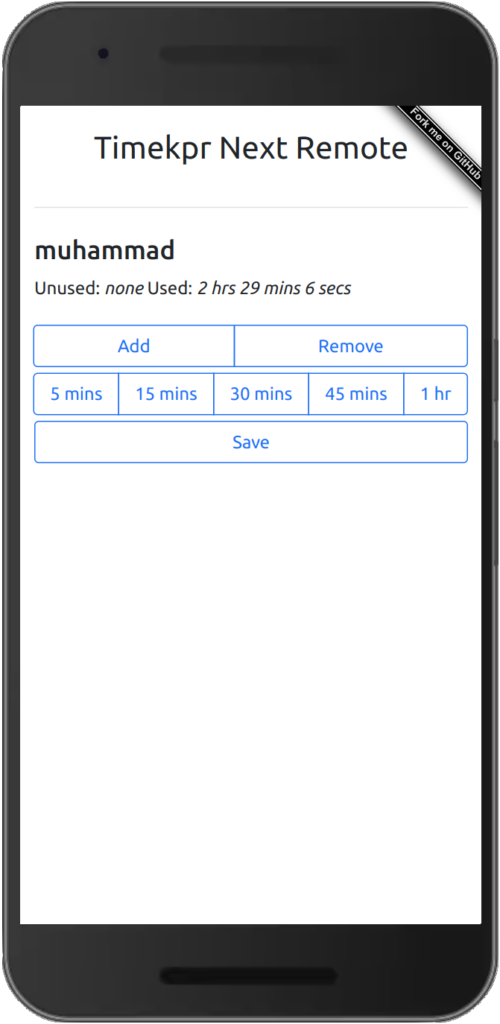

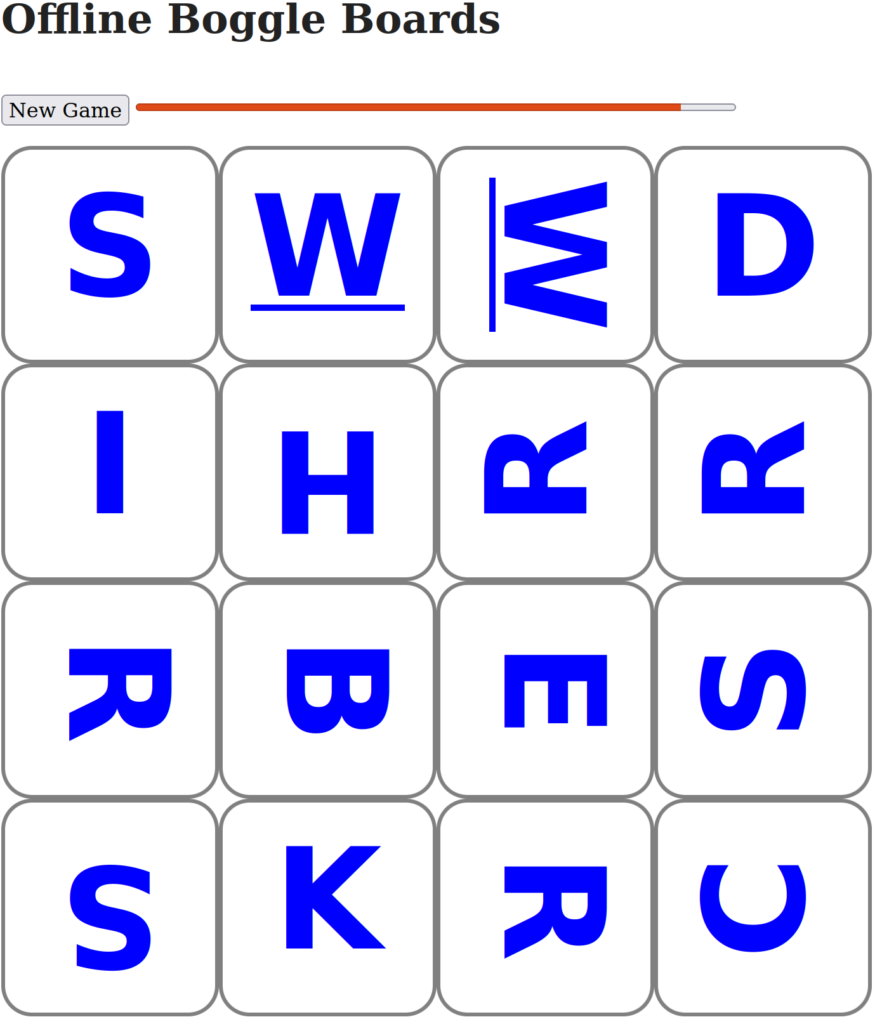

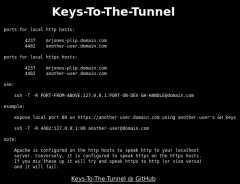

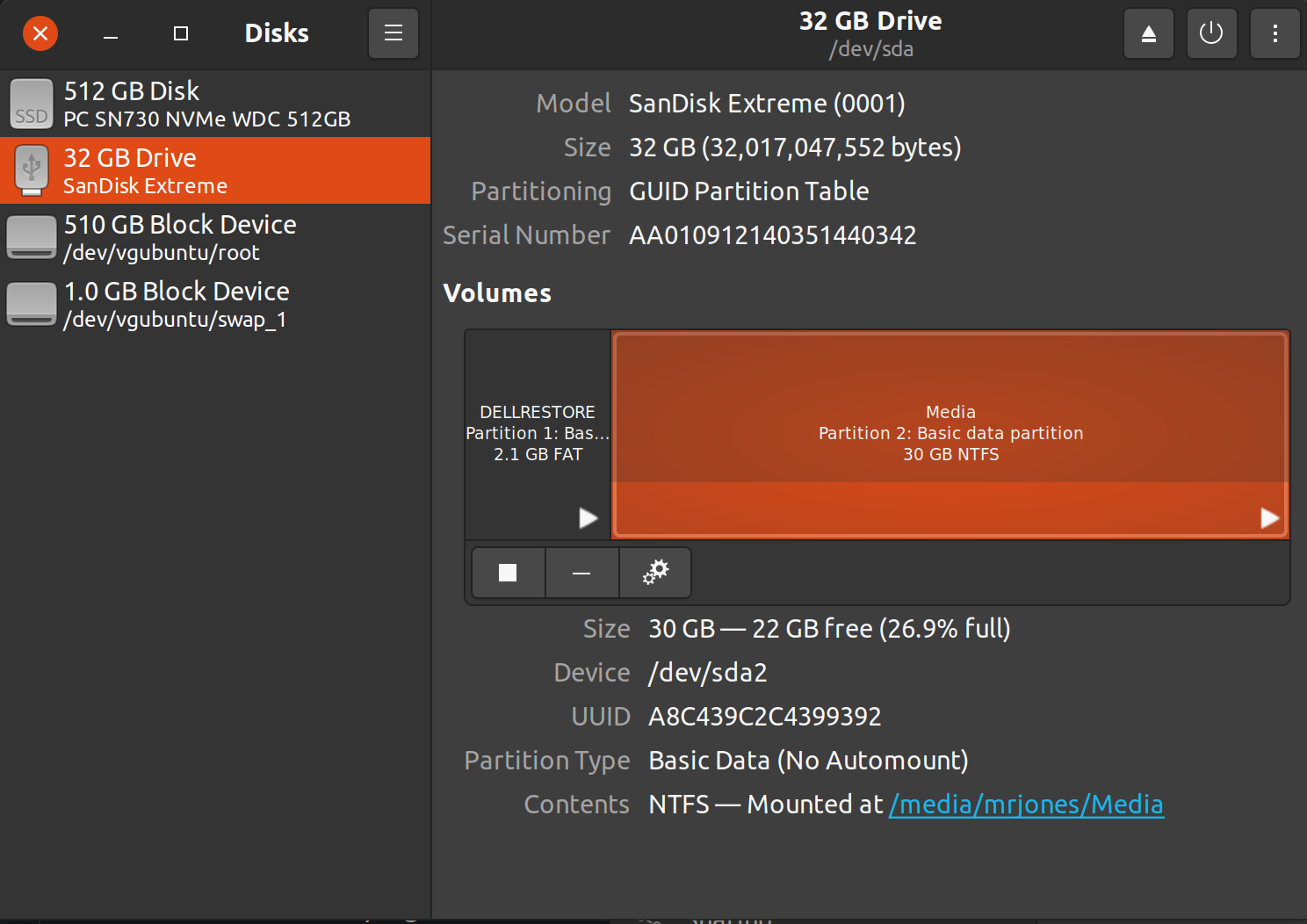

Enter Timekpr-Next Remote! This is a Dockerized Python app that allows you to easily update your kids’ computer time right from the nearest parental phone or desktop device:

As you can see, for any given user (only one sample user, “Muhammad”, is shown here) you can easily add more time (or remove time if you fat-fingered the add time). Given how ubiquitous phones are, having a self-hosted, non-cloud way to easily control time has been a win for us. Video chat with grandma after you’ve done your homework and used all your time allotment? Add -> 30 min -> Save, it only takes 3 seconds \o/

SSH FTW

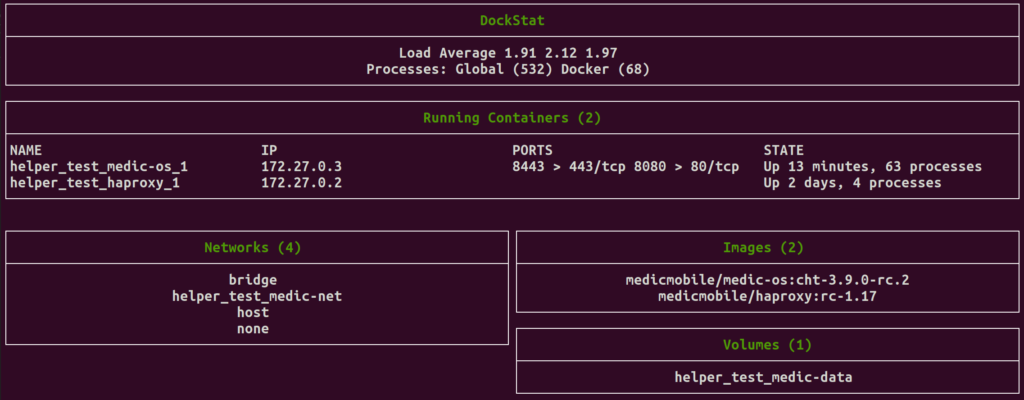

Let’s have a look under the covers at how all this works.

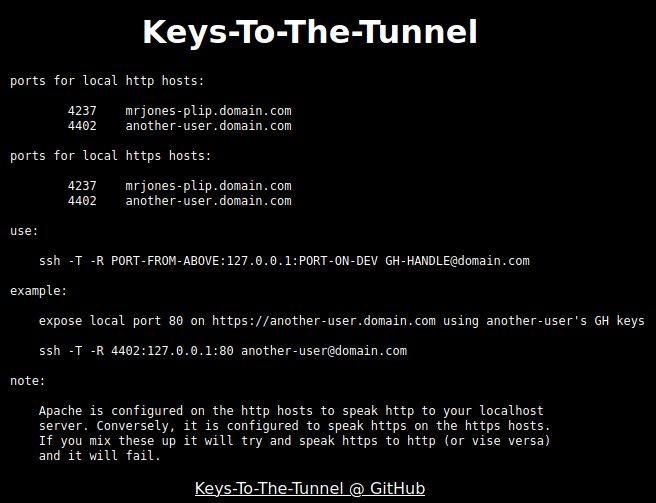

I should start out by saying that Timekpr is licensed GNU GPL v3 and they post the code online. I did consider adding an HTTP server to the core package upstream to handle REST requests. Then I realized I’d have to do that securely and that I’d have to deal with certificates and such. A greenfield approach would be quicker (grok only my code, not someone else’s) and more secure, but not as clean (I used SSH vs REST). With that out of the way…

Timekpr Remote uses SSH to communicate! This is clunky, indeed. But Python has a wonderful SSH library in the form of Fabric (which in turn uses the awesome Paramiko), which took a lot of the really clunky parts out and made them pretty elegant.
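To make the SSH idea concrete, here’s a minimal sketch of what one round trip could look like with Fabric. This is not the project’s actual code: the function names are made up for illustration, and the `timekpra --userinfo` and `timekpra --settimeleft` arguments reflect my understanding of the Timekpr admin CLI, so check `timekpra --help` on your own install before relying on them.

```python
import shlex


def userinfo_command(user: str) -> str:
    """Build the remote command that asks Timekpr for one user's status."""
    # Assumption: `timekpra --userinfo <user>` prints that user's limits and usage.
    return f"timekpra --userinfo {shlex.quote(user)}"


def adjust_time_command(user: str, seconds: int) -> str:
    """Build the remote command that adds (or, if negative, removes) time."""
    operation = "+" if seconds >= 0 else "-"
    # Assumption: `timekpra --settimeleft <user> <+|-> <seconds>`.
    return (
        f"timekpra --settimeleft {shlex.quote(user)} "
        f"{shlex.quote(operation)} {abs(int(seconds))}"
    )


def run_remote(host: str, ssh_user: str, command: str) -> str:
    """Run one command over SSH with Fabric and return its stdout."""
    from fabric import Connection  # third-party: pip install fabric

    # Key-based auth is assumed; Fabric picks up keys from the SSH agent/config.
    with Connection(host=host, user=ssh_user) as conn:
        result = conn.run(command, hide=True)  # hide=True: don't echo output
    return result.stdout
```

Adding 30 minutes for a kid would then be something like `run_remote("192.168.1.50", "timekpr-remote", adjust_time_command("muhammad", 1800))`, where the IP and SSH user are placeholders. Quoting every argument with `shlex.quote` keeps a weird user name from turning into a second shell command.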

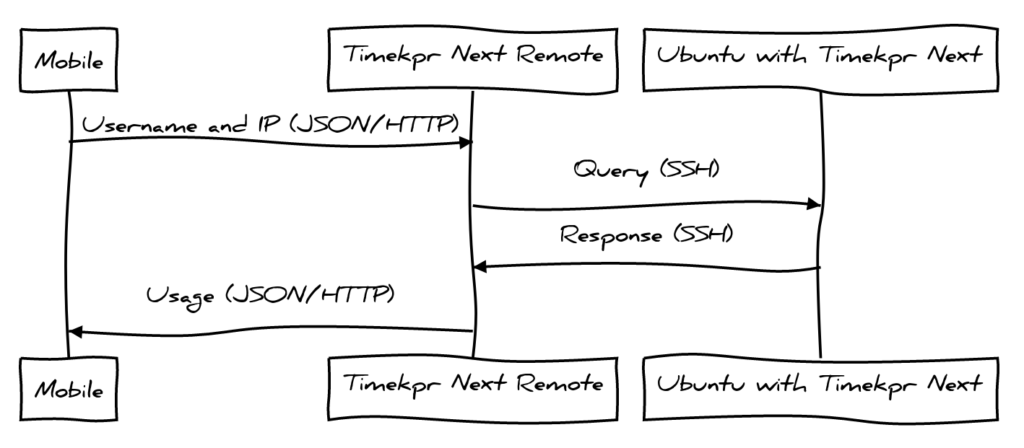

Here’s the flow of data when we load the page and want to get the current usage of Muhammad:

All data flows this way, and there are three AJAX endpoints that the web client calls via this flow:

- Get all Users and IPs

- Get usage for a user and IP pair

- Add/Remove time for a user and IP pair
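The three endpoints above can be sketched roughly like this in Flask. The route paths, the in-memory `USERS` and `TIME_LEFT` dictionaries, and all function names are hypothetical stand-ins so the example runs on its own; the real app would read its user list from config and fetch usage over SSH instead. Unlike the real app, this sketch uses a POST for the change.

```python
# Hypothetical in-memory stand-ins for the real config and the SSH calls.
USERS = {"muhammad": "192.168.1.50"}
TIME_LEFT = {("muhammad", "192.168.1.50"): 0}  # seconds left today


def list_users() -> dict:
    """Endpoint 1: all users and the IP of the computer each one uses."""
    return {"users": [{"user": u, "ip": ip} for u, ip in USERS.items()]}


def get_usage(user: str, ip: str) -> dict:
    """Endpoint 2: seconds left today for one user and IP pair."""
    return {"user": user, "ip": ip, "time_left": TIME_LEFT[(user, ip)]}


def change_time(user: str, ip: str, seconds: int) -> dict:
    """Endpoint 3: add (positive) or remove (negative) seconds, floor at zero."""
    TIME_LEFT[(user, ip)] = max(0, TIME_LEFT[(user, ip)] + seconds)
    return get_usage(user, ip)


def create_app():
    """Wire the three functions to Flask routes (needs `pip install flask`)."""
    from flask import Flask, jsonify, request

    app = Flask(__name__)

    @app.get("/users")
    def users_route():
        return jsonify(list_users())

    @app.get("/usage/<user>/<ip>")
    def usage_route(user, ip):
        return jsonify(get_usage(user, ip))

    @app.post("/time/<user>/<ip>")
    def time_route(user, ip):
        # Expects a JSON body such as {"seconds": 1800}.
        seconds = int(request.get_json()["seconds"])
        return jsonify(change_time(user, ip, seconds))

    return app
```

Keeping the logic in plain functions and the Flask wiring in `create_app()` means the functions can be exercised without a web server. An unknown user or IP simply raises `KeyError` here; a real app would want to return a 404 instead.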

This isn’t a perfect REST API, but it’s OK enough. It was my first time writing an app in Flask, so it was fun to figure out how to do different URL handling and JSON returning and such, even if my REST uses some GETs instead of POSTs/PUTs.

“s” in Timekpr-next Remote is for “Security”

While we can trust SSH between Timekpr Remote and Server, you may note a lack of authentication between the mobile handset and Timekpr Remote. Indeed, there is none. Here’s what I recommend:

Run something like Traefik or Caddy in another Docker container. From there you can bind the Timekpr-Next Remote server to the host Docker IP with something like TIMEKPR_IP=172.17.0.1 docker compose up -d. It will no longer be available on the network directly, only via the reverse proxy you set up.

You can then either use basicauth (e.g. in Caddy) or do what I did, which is make a host name that is un-guessable, like https://user-time-8957446623432192758492038.domain.com. Everyone just bookmarks this. Even if your kids see the URL, they won’t be able to remember it.

For those that give SSH the stink eye (smells like an injection attack, eh?), you can harden this too. Ensure the SSH user on the server cannot do anything more than run timekpra by restricting it in the authorized_keys file on each client. This will ensure that if extra variables are passed (though we do explicitly protect against this), they won’t do any more harm.
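As for the “explicitly protect against this” part, here’s a sketch of the kind of input check that can sit in front of every SSH call. It is an illustration rather than the project’s actual validation: the user name pattern, the one-day cap, and the `validate_request` name are all my assumptions, so tune them to your own setup.

```python
import re

# Assumption: login names follow the usual Linux form (lowercase, max 32 chars).
USERNAME_RE = re.compile(r"[a-z_][a-z0-9_-]{0,31}")
MAX_SECONDS = 24 * 60 * 60  # never adjust by more than one day at a time


def validate_request(user: str, seconds) -> tuple[str, int]:
    """Return (user, seconds) unchanged, or raise ValueError if either is suspect."""
    if not USERNAME_RE.fullmatch(user):
        raise ValueError(f"invalid user name: {user!r}")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(seconds, bool) or not isinstance(seconds, int):
        raise ValueError("seconds must be an integer")
    if abs(seconds) > MAX_SECONDS:
        raise ValueError("adjustment is larger than one day")
    return user, seconds
```

So `validate_request("muhammad", 1800)` passes straight through, while something like `validate_request("muhammad; reboot", 1800)` raises before anything touches SSH. With the authorized_keys restriction on top, a value that somehow slipped past still couldn’t run anything other than timekpra.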

Like and Subscribe that video

Here’s a 9 second video demonstrating it in situ!

Joke’s on you though, there’s no like and subscribe because it’s not actually YouTube (though GitHub is pretty close to a social media network…)