Intro and LXD install

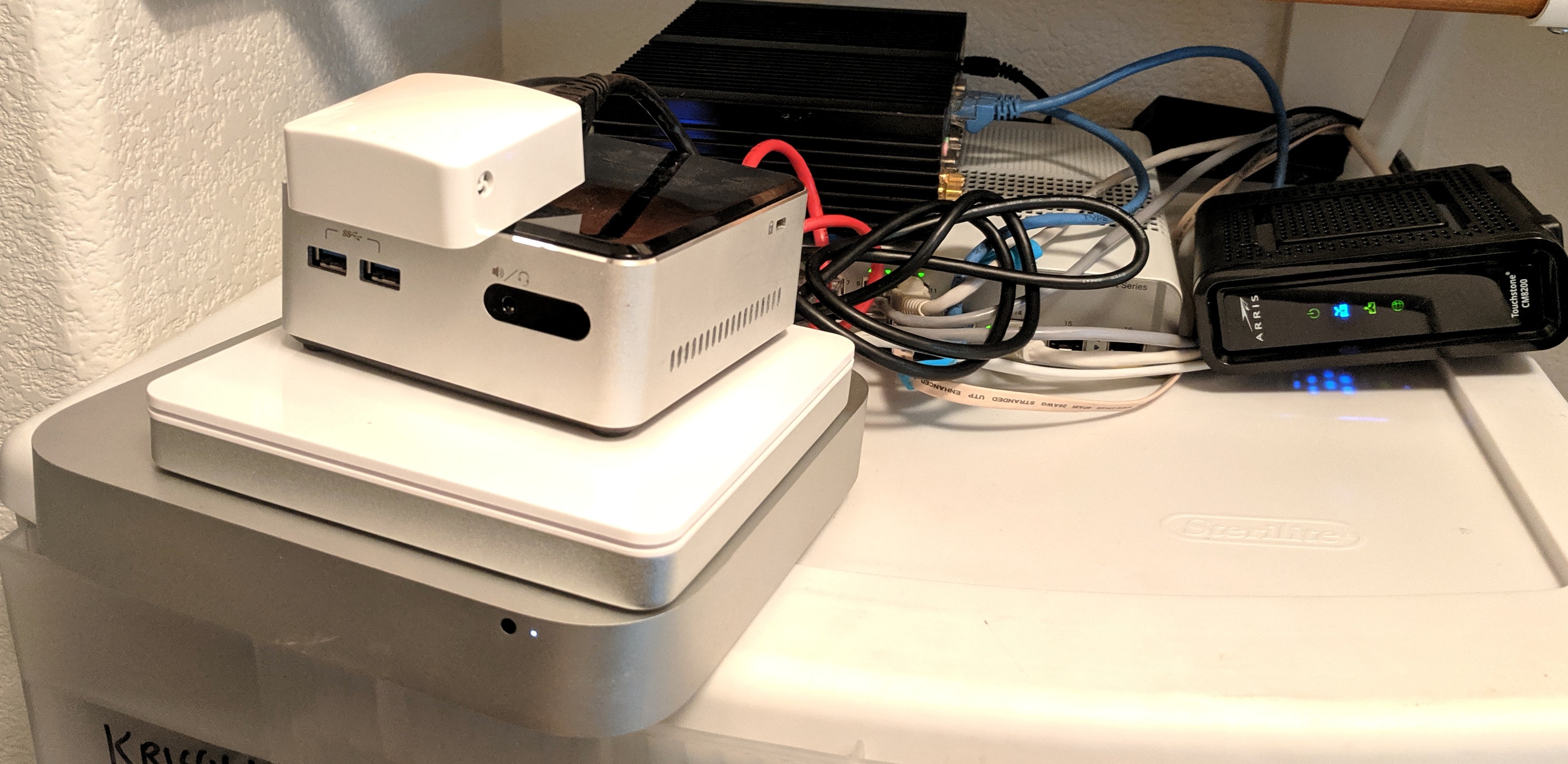

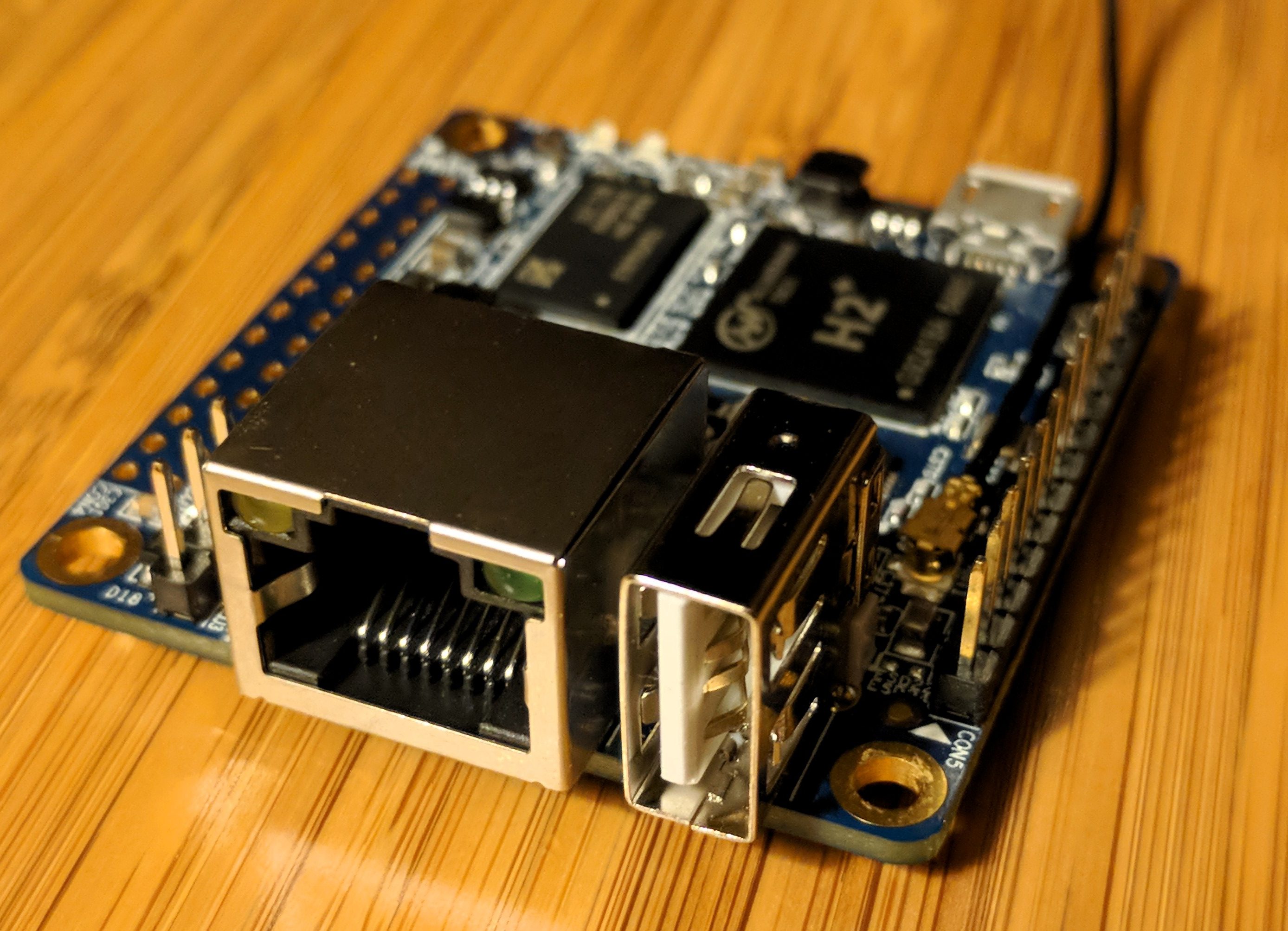

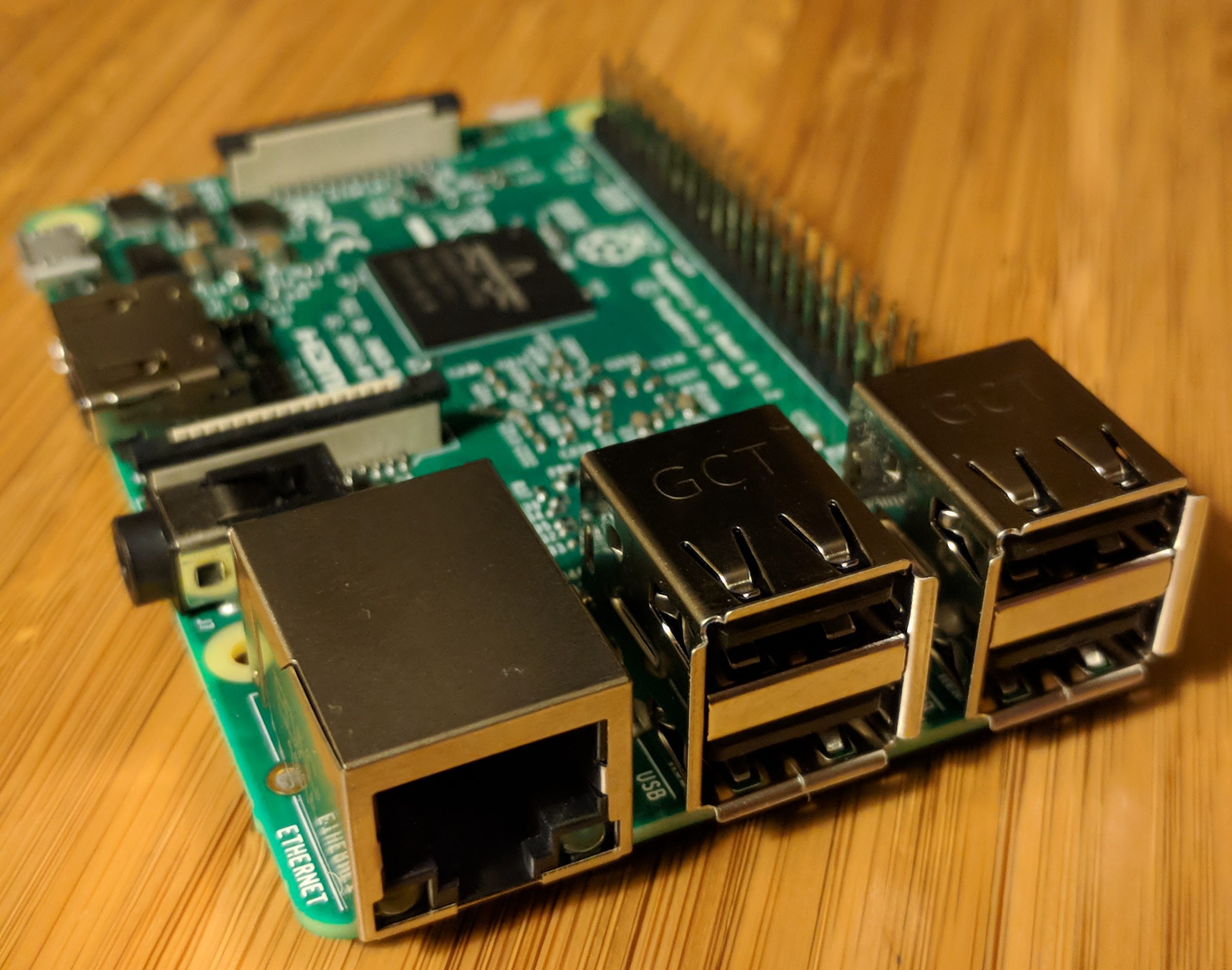

At work recently I was charged with rebuilding a bare metal host. Beyond needing to follow our security best practices and be well documented, it was left up to me how to do it. I had my own needs for test VMs and there was a pending request for a VM* for a semi-production instance. This meant some VMs* would be fine in a traditional NATed environment, where they had no publicly accessible interfaces, and others would need full-fledged public IPs. (* – I’m using “VM” liberally in this post. These are technically LXD containers which use the host kernel.)

Given my penchant for LXD, I’m guessing you can see where this is going ;) If you don’t know my penchant, check out these posts, specifically “From zero to LXD: Installing a private compute cloud on a Cisco C220 M4SFF”.

I won’t go into as much nitty-gritty detail on the hardware setup (this time with an older C220 M3 LFF instead of the new M4 SFF). I set the system up very similarly, but was forced to use RAID10 across 4 drives – no fancy ZFS setup this time. I’ll lose some performance and features, as LXD was configured to just use the filesystem (/var/lib/lxd), but given I have bare metal in a colo with as many VMs as I want, I’m happy ;)

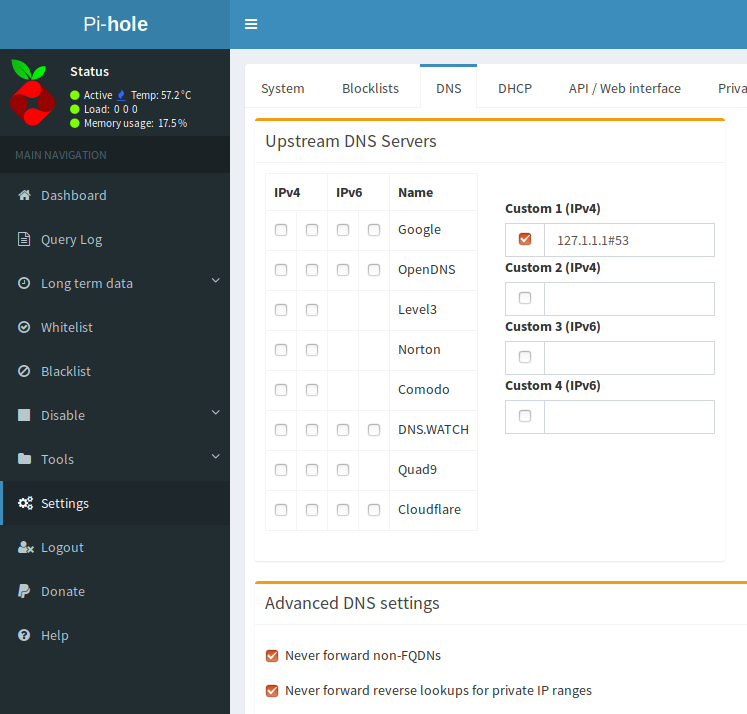

After installing Ubuntu 18.04, giving it a static IP and running our Ansible hardening roles against it, I was ready to configure LXD. The nice thing about LXD is that you can have as many container profiles as you want. This means I can zip through the default lxd init process to have VMs which are behind NAT and then trivially add a new profile that allows hosts to have a public IP after that.

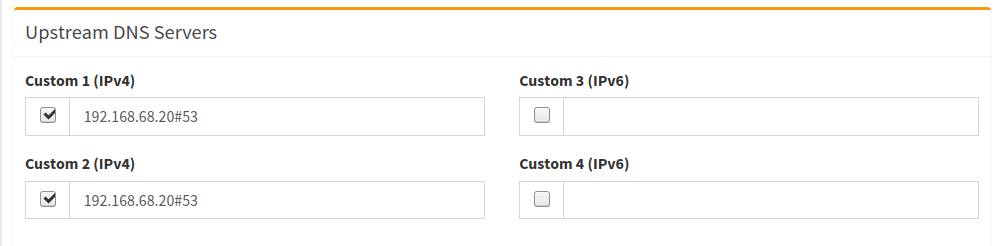

The initial config of LXD looks like this:

Would you like to use LXD clustering? (yes/no) [default=no]:

Do you want to configure a new storage pool? (yes/no) [default=yes]:

Name of the new storage pool [default=default]:

Name of the storage backend to use (btrfs, dir, lvm) [default=btrfs]:

Create a new BTRFS pool? (yes/no) [default=yes]:

Would you like to use an existing block device? (yes/no) [default=no]:

Size in GB of the new loop device (1GB minimum) [default=15GB]:

Would you like to connect to a MAAS server? (yes/no) [default=no]:

Would you like to create a new local network bridge? (yes/no) [default=yes]:

What should the new bridge be called? [default=lxdbr0]:

What IPv4 address should be used? (CIDR subnet notation, “auto” or “none”) [default=auto]:

What IPv6 address should be used? (CIDR subnet notation, “auto” or “none”) [default=auto]:

Would you like LXD to be available over the network? (yes/no) [default=no]:

Would you like stale cached images to be updated automatically? (yes/no) [default=yes]

Would you like a YAML "lxd init" preseed to be printed? (yes/no) [default=no]:

After that, and with HUGE thanks to this concise post by Simos Xenitellis, we can now configure a new profile with Macvlan for VMs that need a public IP. Simos’ post really covers this nicely (I even use the same code snippets ;) ). In short: copy the default profile (lxc profile copy default lanprofile), then set the nictype (lxc profile device set lanprofile eth0 nictype macvlan) and the parent (lxc profile device set lanprofile eth0 parent enp5s12) on the new profile, and we’re ready to go. Note that this assumes your bare metal’s NIC is enp5s12 and your LXD VMs use eth0 (the default).
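If you’d rather keep those three calls in a script, here is a minimal sketch. It only wraps the commands quoted above in a function; the function name is made up for illustration, and the profile name and parent NIC are the same assumptions as in the text (lanprofile and enp5s12), so adjust them to your host.

```shell
#!/bin/sh
# Sketch: build a Macvlan profile from the default (NAT) profile.
# PROFILE and PARENT_NIC are assumptions taken from the text above.
PROFILE="lanprofile"
PARENT_NIC="enp5s12"

# create_macvlan_profile is a hypothetical helper name, not an LXD command.
create_macvlan_profile() {
    # 1. copy the default profile
    # 2. switch eth0 to Macvlan
    # 3. attach it to the host's physical NIC
    # 4. print the resulting profile so you can check it
    lxc profile copy default "$PROFILE" || return 1
    lxc profile device set "$PROFILE" eth0 nictype macvlan || return 1
    lxc profile device set "$PROFILE" eth0 parent "$PARENT_NIC" || return 1
    lxc profile show "$PROFILE"
}

# Run it with: create_macvlan_profile
```

Sourcing the file only defines the function; nothing touches LXD until you actually call create_macvlan_profile.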

Network types: NAT, Bridge & Macvlan

But wait, what is Macvlan? And, just so we’re all clear, how does it differ from the default NAT set up or the fancy bridged set up in my earlier post? Let’s break it down:

- Network Address Translation (NAT) – You’re very likely using this right now to read this post ;) NAT is what enables us to easily share a connection to the Internet without everyone having a public IP. Have you seen IPs that start with 10.x.x.x, 172.16.x.x through 172.31.x.x, or 192.168.x.x? While not exclusive to NAT, they’re the most common IP ranges used in conjunction with it (see RFC 1918 for TMI). NAT allows a gateway to hand out these IPs, which can then send traffic out to the Internet. By rewriting the addresses and ports used, the gateway sends the responses back to the NATed host that originally made the request.

NAT is what LXD uses when you accept all the defaults in lxd init. This is super handy for testing and development! As well, we can use it to our advantage with a reverse HTTP proxy in production – more on this below.

- Bridge – Bridges are a layer 2 connection that makes it appear as if all devices are on the same network. This is convenient when you want all devices to work with the same IP range, either with public IPs or in your NATed network. This is how I set up LXD in the prior article. Any time a VM is created in LXD, it can see all hosts, but it does take a slightly more complex network setup on the bare metal.

- Macvlan – I’ll quote this great write up on hicu.be to describe Macvlan: “[it] allows you to configure multiple Layer 2 (i.e. Ethernet MAC) addresses on a single physical interface. Macvlan allows you to configure sub-interfaces (also termed slave devices) of a parent, physical Ethernet interface (also termed upper device), each with its own unique (randomly generated) MAC address, and consequently its own IP address.” This achieves the same result as bridges with one major caveat: host and VMs cannot talk to each other. That is, your VMs won’t be able to talk to your bare metal LXD host and vice versa – caveat emptor!

Now that you know what the three setups are, and how easy it was to set up NAT (just accept LXD defaults) and how easy it is to set up Macvlan (3 command line calls) – let’s see what we can do with them!

Again per Simos’ post, we can easily create a new NATed VM and then a Macvlan VM like so:

lxc launch ubuntu: natVM

lxc launch -p lanprofile ubuntu: lanVM
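Once both are up, a quick way to see the two network types side by side is to list the containers with their IPv4 addresses. This is just a sketch: the function name is made up, and it assumes an LXD version that supports the csv output format (the 3.0 series shipped with 18.04 should). The natVM should show an address from the lxdbr0 range, while lanVM should show whatever your LAN’s DHCP server handed out.

```shell
#!/bin/sh
# Sketch: print "name,ipv4" for every container on this host.
# show_container_ips is a hypothetical helper name.
show_container_ips() {
    # -c n4 selects the name and IPv4 columns.
    lxc list --format csv -c n4
}

# Run it with: show_container_ips
```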

To set a static IP on either host, assuming you’re running Ubuntu 18.04 like me, you’d just edit /etc/netplan/50-cloud-init.yaml. So let’s say I wanted to give the natVM IP .10 in the 10.x.x.x range that LXD gave me and use Quad9 for DNS. I’d edit 50-cloud-init.yaml to look like this:

    network:
      version: 2
      renderer: networkd
      ethernets:
        eth0:
          dhcp4: no
          addresses: [10.0.0.10/24]
          gateway4: 10.0.0.1
          nameservers:
            addresses: [9.9.9.9]
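One thing to watch: LXD picks the lxdbr0 subnet for you, so the 10.0.0.x addresses here are just an example and yours will likely differ. A rough sketch of how you might check the bridge’s subnet and then apply the file from the bare metal host follows. The function names are made up, and it assumes you keep a local copy of the YAML next to the script.

```shell
#!/bin/sh
# Sketch: look up the NAT bridge subnet, then push and apply a netplan file.
# bridge_subnet and apply_static_ip are hypothetical helper names.

# Prints the bridge address in CIDR form; it is also the gateway4 value.
bridge_subnet() {
    lxc network get lxdbr0 ipv4.address
}

# $1 = container name, $2 = local path to the edited YAML file
apply_static_ip() {
    lxc file push "$2" "$1/etc/netplan/50-cloud-init.yaml" || return 1
    lxc exec "$1" -- netplan apply
}

# Example:
#   bridge_subnet
#   apply_static_ip natVM ./50-cloud-init.yaml
```

Pushing the file from the host keeps the YAML under version control with the rest of your automation, but editing it inside the container works just as well.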

This ends the part of the post where we talk about NAT and Macvlan both easily co-existing on LXD. Now on to what you might do with that set up! Specifically, how you might use Apache to forward on HTTP requests on a public IP to a NATed VM.

Apache reverse proxy

If you wanted to run lots of VMs, none of which needed a public IP, but a few needed to run a public service, you might wonder how best to do this. In my case, I had a small number of public IPs, so burning one for every VM was a big waste. A better way is to just selectively forward some HTTP traffic from the bare-metal host’s public IP to a NATed VM’s IP. I’m an Apache kinda person, but this could be done with your web server of choice. It goes without saying, but this trick will only work with HTTP traffic. I’ll speak to being able to SSH “directly” to any NATed hosts below!

Let’s get started by installing apache2 on the Ubuntu bare-metal host and enabling some key modules:

apt install apache2

a2enmod ssl rewrite proxy proxy_http

Now edit /etc/apache2/ports.conf so that it’s listening on any ports you need – in our example it’s 3000 (Grafana) and 8086 (InfluxDB) so we’ll add just two lines:

    <IfModule ssl_module>
        Listen 443
        Listen 3000
        Listen 8086
    </IfModule>

Assuming you want to run a service on 8086 (InfluxDB) and a service on 3000 (Grafana) on the VM we configured above on .10, you’d create a vhost file called /etc/apache2/sites-available/influxdb-int.conf and it would look like this:

    <VirtualHost *:3000>
        ServerName grafana-int.example.com
        LogLevel warn
        SSLEngine on
        SSLCertificateFile /etc/httpd/ssl.crt/your.crt
        SSLCertificateKeyFile /etc/httpd/ssl.key/your.key
        # Needed because the backend below is reached over https://
        SSLProxyEngine on
        ProxyRequests Off
        <Proxy *>
            Require all granted
        </Proxy>
        ProxyPass / https://10.0.0.10:3000/
        ProxyPassReverse / https://10.0.0.10:3000/
    </VirtualHost>

    <VirtualHost *:8086>
        ServerName influxdb-int.example.com
        LogLevel warn
        SSLEngine on
        SSLCertificateFile /etc/httpd/ssl.crt/your.crt
        SSLCertificateKeyFile /etc/httpd/ssl.key/your.key
        ProxyRequests Off
        <Proxy *>
            Require all granted
        </Proxy>
        ProxyPass / http://10.0.0.10:8086/
        ProxyPassReverse / http://10.0.0.10:8086/
    </VirtualHost>

Note that this assumes you’re running everything over TLS (you should!!). As well, it assumes that your cert (SSLCertificateFile) is in /etc/httpd/ssl.crt and your key (SSLCertificateKeyFile) is in /etc/httpd/ssl.key. Change these according to your specific setup.
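With the vhost file in place, it still needs to be enabled before Apache will serve it. A minimal sketch of that step, assuming the file name used above (influxdb-int.conf) and a systemd-managed Apache as on Ubuntu 18.04; the function name is made up for illustration:

```shell
#!/bin/sh
# Sketch: enable the new vhost, check the config, then restart Apache.
# enable_proxy_site is a hypothetical helper name.
# $1 = site name (defaults to the vhost file created above)
enable_proxy_site() {
    site="${1:-influxdb-int}"
    a2ensite "$site" || return 1
    # Catch syntax errors before they take the web server down.
    apache2ctl configtest || return 1
    # A restart (rather than a reload) also picks up the newly enabled
    # modules and the extra Listen ports.
    systemctl restart apache2
}

# Run as root: enable_proxy_site
```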

From here, you would set up your apps and ensure they’re working locally on .10, after which they should work on the public IP of your bare metal. Of course these all need to be configured to use TLS over the default HTTP. Huh – sounds like a whole “How to harden your TIG deployment” might be in order! (Of course, store any passwords encrypted when automating your deployments.)
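To check the proxy from the outside, something like the following sketch might help. It assumes the two hostnames from the vhost above resolve to your bare metal’s public IP, and it leans on two health endpoints that are assumptions about your app versions: Grafana’s /api/health and InfluxDB 1.x’s /ping. The function names are made up for illustration.

```shell
#!/bin/sh
# Sketch: print the HTTP status code each proxied service returns.
# http_status and check_tig_proxy are hypothetical helper names.

# $1 = URL to probe; prints only the status code
http_status() {
    curl -sS -o /dev/null -w '%{http_code}\n' "$1"
}

check_tig_proxy() {
    # Endpoint paths are assumptions; adjust for your versions.
    echo "grafana:  $(http_status "https://grafana-int.example.com:3000/api/health")"
    echo "influxdb: $(http_status "https://influxdb-int.example.com:8086/ping")"
}

# Run it with: check_tig_proxy
```

A healthy Grafana typically answers 200 and InfluxDB 1.x typically answers 204 on /ping. A 502 or 503 usually means Apache could not reach the backend on the .10 host.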

Secure SSH to NATed LXD hosts

A final note on this setup is how to securely SSH to LXD hosts. Of course you can just SSH to your bare metal host and then bash in (e.g. lxc exec natVM bash), but how do you run your Ansible roles, or another automation tool, against these NATed VMs? SSH config files to the rescue!

Let’s assume the public IP of your bare metal is 1.2.3.4 and you want to SSH to the 10.0.0.10 IP we just set up above. All you need to do is create a file in your .ssh folder called “config” with 3 lines like this:

    Host natVM
        Hostname 10.0.0.10
        ProxyCommand ssh -W %h:%p 1.2.3.4

With this setup, you can run ssh natVM and SSH will automatically read the configuration and securely proxy the connection through the 1.2.3.4 host to your internal-only .10 host. This works especially well when you have SSH keys set up with an SSH agent.
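A few command-line companions to that config, as a sketch. The function names are made up; the IPs and the natVM alias are the same example values as above. The -J flag is the one-off equivalent of the ProxyCommand line (it needs OpenSSH 7.3 or newer, which 18.04 has), ssh -G prints the options SSH would actually use for a host, and since Ansible uses your SSH client config by default, the alias should work there too.

```shell
#!/bin/sh
# Sketch: helpers around the natVM entry in ~/.ssh/config.
# All three function names are hypothetical.

# One-off jump through the bare metal host, no config file needed.
ssh_via_jump() {
    ssh -J 1.2.3.4 10.0.0.10 "$@"
}

# Show what SSH resolves the natVM alias to, without connecting.
show_resolved_config() {
    ssh -G natVM | grep -i -E '^(hostname|proxycommand) '
}

# Ad hoc Ansible ping; the trailing comma makes "natVM," an inline inventory.
ansible_ping_natvm() {
    ansible all -i 'natVM,' -m ping
}

# Examples:
#   ssh_via_jump uptime
#   show_resolved_config
#   ansible_ping_natvm
```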

Drop me a note if you have any questions!